SpringBoot集成Langchain4j-RAG语意增强会话

前面两篇文章已经做好了AI对话和向量库的准备,并且存入了一批向量化的房产数据。

·

前面两篇文章已经做好了AI对话和向量库的准备,并且存入了一批向量化的房产数据

**检索:**查询向量库

**增强:**增强用户的提示词Prompt

**生成:**结合LLM

RAG 的本质是一种“增强策略”(Augmentation Strategy),向量库只是实现它的一种(常用但非唯一)手段。

AI对话代码

Controller

// 当前使用:Flux + SSE【前端也得用sse】

@RequestMapping(value = "/role/stream/sse", produces = MediaType.TEXT_EVENT_STREAM_VALUE)

public Flux<String> roleStreamSse(@RequestParam(defaultValue = "你好,介绍下你是谁") String prompt, @RequestParam long chatId) {

RequestContext context = RequestContextHolder.getContext();

return Flux.<String>create(sink -> ThreadPoolUtil.getThreadPool().submit(() -> {

RequestContextHolder.setContext(context);

try {

String userName = context.getUserName();

taskManager.startTask(sink, chatId, userName, prompt, DateFormat.getDateInstance().format(new Date()));

} finally {

// 防止内存泄漏

RequestContextHolder.clear();

}

})).delayElements(Duration.ofMillis(50));

}

@GetMapping("/cancel")

public String cancelChat(@RequestParam long chatId) {

taskManager.cancelTask(chatId);

return "ok";

}

AI对话管理类

@Slf4j

@Component

public class TaskManager {

private final Map<Long, FluxSink<String>> sinks = new HashMap<>();

private final Map<Long, AtomicBoolean> tasks = new ConcurrentHashMap<>();

@Autowired

private AiConfig.AssistantUnique assistant;

public void startTask(FluxSink<String> currentSink, long chatId, String userName, String prompt, String timestamp) {

sinks.put(chatId, currentSink);

AtomicBoolean cancelled = new AtomicBoolean(false);

tasks.put(chatId, cancelled);

try {

long start = System.currentTimeMillis();

TokenStream stream = assistant.roleStream(chatId, userName, prompt, timestamp);

stream.onPartialResponse(chunk -> {

if (cancelled.get()) return; // 已取消则不再发送

currentSink.next(chunk);

})

.onCompleteResponse(response -> {

if (cancelled.get()) return;

currentSink.complete();

})

.onError(err -> {

if (cancelled.get()) return;

currentSink.error(err);

})

.start();

currentSink.onDispose(() -> {

currentSink.complete();

this.cancelTask(chatId);

log.info("手动终结会话");

});

long end = System.currentTimeMillis();

log.info("任务执行完成,耗时:{} ms", (end - start));

} catch (Exception e) {

if (!cancelled.get()) {

e.printStackTrace();

}

} finally {

tasks.remove(chatId);

}

}

public void cancelTask(long chatId) {

AtomicBoolean cancelled = tasks.get(chatId);

if (cancelled != null) {

cancelled.set(true);

tasks.remove(chatId);

}

sinks.remove(chatId);

}

}

AI配置类

package com.blue.basic.ai.config.chat;

import com.blue.basic.ai.tool.AiToolService;

import com.blue.basic.ai.tool.RoleConstant;

import dev.langchain4j.memory.chat.ChatMemoryProvider;

import dev.langchain4j.memory.chat.MessageWindowChatMemory;

import dev.langchain4j.model.ollama.OllamaStreamingChatModel;

import dev.langchain4j.rag.content.retriever.ContentRetriever;

import dev.langchain4j.service.*;

import org.springframework.context.annotation.Bean;

import org.springframework.context.annotation.Configuration;

import java.util.HashMap;

import java.util.Map;

@Configuration

public class AiConfig {

// 自定义不同用户的聊天会话AI助手

public interface AssistantUnique {

String chat(@MemoryId long memoryId, @UserName String userName, @UserMessage String message);

TokenStream stream(@MemoryId long memoryId, @UserName String userName, @UserMessage String message);

@SystemMessage(RoleConstant.SYSTEM_PROMPT)

TokenStream roleStream(@MemoryId long memoryId, @UserName String userName, @UserMessage String message, @V("current_date")String currentDate);

}

@Bean

public OllamaStreamingChatModel ollamaStreamingChatModel() {

Map<String, String> map = new HashMap<>();

map.put("Content-Type", "application/json;charset=utf-8");// 处理乱码问题

return OllamaStreamingChatModel.builder()

.baseUrl("http://127.0.0.1:11434")

.modelName("qwen3:4b")

.temperature(0.8)

.logRequests(true)

.logResponses(true)

.customHeaders(map)

.build();

}

@Bean

public AssistantUnique assistantUnique(OllamaStreamingChatModel ollamaStreamingChatModel,

AiToolService toolService,

ChatMemoryPersistent chatMemoryPersistent,

ContentRetriever contentRetriever) {

// 使用本地大模型

ChatMemoryProvider chatMemoryProvider = memoryId -> MessageWindowChatMemory.builder()

.maxMessages(10)

.id(memoryId)

.chatMemoryStore(chatMemoryPersistent)

.build();

return AiServices.builder(AssistantUnique.class)

.tools(toolService)

.chatMemoryProvider(chatMemoryProvider)

.streamingChatModel(ollamaStreamingChatModel)

.contentRetriever(contentRetriever)

.build();

}

}

RAG检索增强

只需要在TaskManager中,将用户输入的Prompt,进行增强,进行RAG做向量检索

public void startTask(FluxSink<String> currentSink, long chatId, String userName, String prompt, String timestamp) {

sinks.put(chatId, currentSink);

AtomicBoolean cancelled = new AtomicBoolean(false);

tasks.put(chatId, cancelled);

try {

// RAG 检索相关上下文

String enhancedPrompt = enhancePromptWithRag(prompt);

long start = System.currentTimeMillis();

TokenStream stream = assistant.roleStream(chatId, userName, enhancedPrompt, timestamp);

// ... 其余代码保持不变

} catch (Exception e) {

// ... 异常处理

}

}

/**

* RAG增强

*/

private String enhancePromptWithRag(String originalPrompt) {

// 生成问题嵌入

Embedding questionEmbedding = embeddingModel.embed(originalPrompt).content();

// 搜索相关文档

EmbeddingSearchRequest request = EmbeddingSearchRequest.builder()

.queryEmbedding(questionEmbedding)

.maxResults(3)

.build();

EmbeddingSearchResult<TextSegment> searchResult = embeddingStore.search(request);

List<EmbeddingMatch<TextSegment>> relevantSegments = searchResult.matches();

// 如果没有找到相关文档,返回原始提示

if (relevantSegments.isEmpty()) {

return originalPrompt;

}

// 构建增强后的提示

StringBuilder contextBuilder = new StringBuilder();

for (EmbeddingMatch<TextSegment> match : relevantSegments) {

contextBuilder.append(match.embedded().text()).append("\n\n");

}

return String.format(

"基于以下上下文信息回答问题:\n\n%s\n问题: %s",

contextBuilder,

originalPrompt

);

}

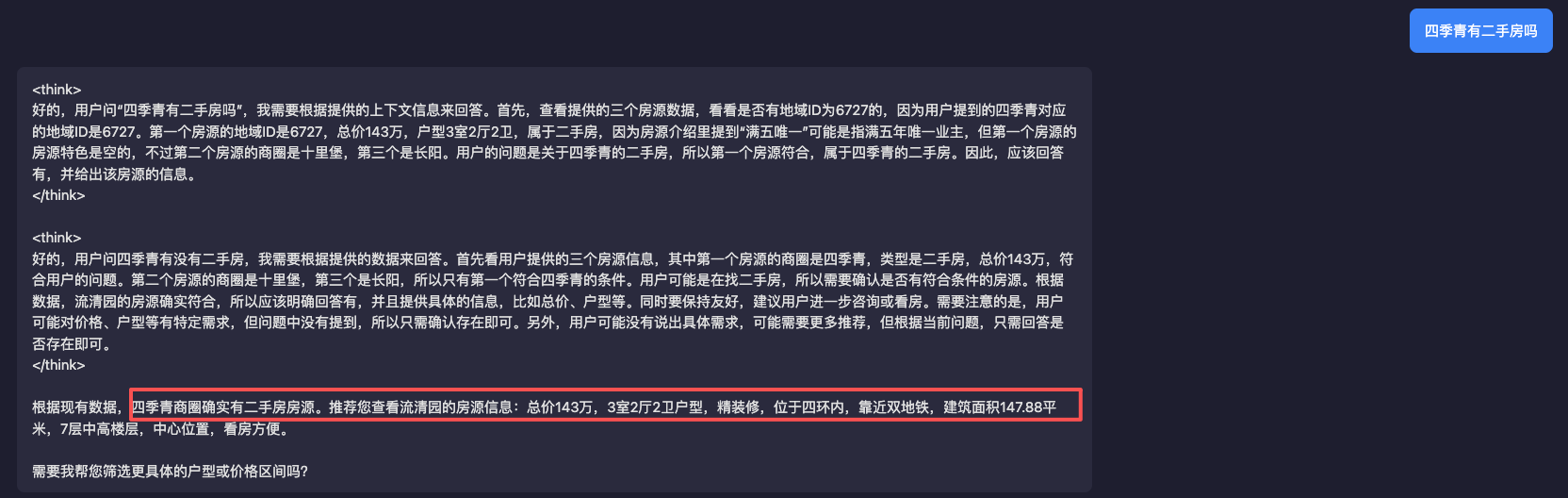

效果

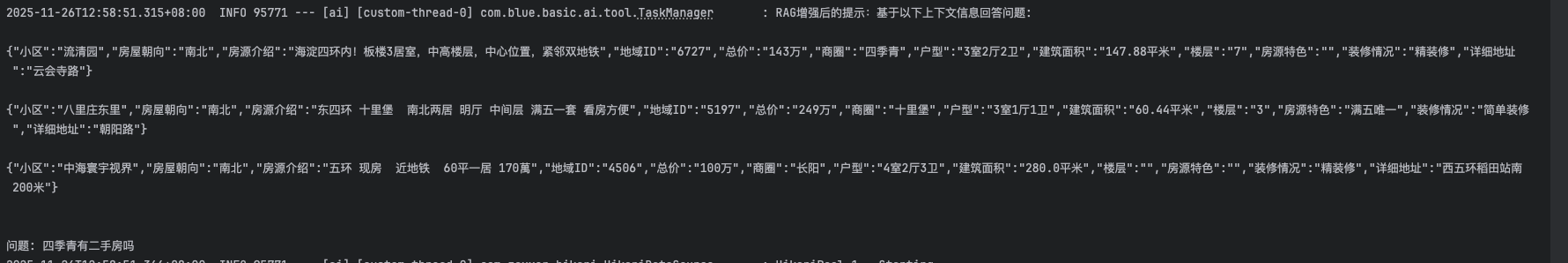

日志

自此,RAG增加AI会话的目的就达到了,其中think的过程有些坎坷,是因为我使用了@Tool做会话识别的增强;

思考

创建Milvus的集合过程中,使用界面化工具Attu操作时趟了一些坑,比如Langchain4j中的id类型不能创建为int,需要改成varchar,metadata字段一直找不到,和Langchain4j的设置对不上,以及RAG检索增强的时候,text字段为空,这是我后来补的字段,小问题还挺多的;万幸,终将fix it,有效的减少了会话的幻觉治理问题~

火山引擎开发者社区是火山引擎打造的AI技术生态平台,聚焦Agent与大模型开发,提供豆包系列模型(图像/视频/视觉)、智能分析与会话工具,并配套评测集、动手实验室及行业案例库。社区通过技术沙龙、挑战赛等活动促进开发者成长,新用户可领50万Tokens权益,助力构建智能应用。

更多推荐

已为社区贡献2条内容

已为社区贡献2条内容

所有评论(0)