Installing and Using Logstash


Introduction to Logstash:

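Logstash is the central dataflow engine of the Elastic Stack. It collects, enriches, and unifies all of your data regardless of format or schema, and its real-time processing is especially powerful when used together with Elasticsearch, Kibana, and Beats.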

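Each input stage in a Logstash pipeline runs in its own thread. Inputs write events to a central queue that lives in memory (the default) or on disk. Each pipeline worker thread takes a batch of events from this queue, runs the batch through the configured filters, and then sends the filtered events to the configured outputs. Both the batch size and the number of pipeline worker threads are configurable.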

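By default, Logstash uses in-memory bounded queues between pipeline stages (input → filter and filter → output) to buffer events. If Logstash terminates unsafely, all events held in memory are lost. To prevent data loss, you can have Logstash persist in-flight events to disk.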

Installing Logstash:

See the official Logstash documentation: https://www.elastic.co/guide/en/logstash/current/index.html

Download the software

# curl -OL https://artifacts.elastic.co/downloads/logstash/logstash-8.15.0-linux-x86_64.tar.gz

# tar -xf logstash-8.15.0-linux-x86_64.tar.gz -C /usr/local/

# mv /usr/local/logstash-8.15.0/ /usr/local/logstash

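Update the Java environment

(Optional. As the startup log further below warns, Logstash 8.0 and later ignores JAVA_HOME and runs on its bundled JDK; if you really need a different JDK, set LS_JAVA_HOME instead. The steps here only make the bundled JDK the system-wide default `java`.)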

[root@logstash jdk]# java -version

openjdk version "11.0.15" 2022-04-19 LTS

# vim /etc/profile    # append the following lines

JAVA_HOME=/usr/local/logstash/jdk

PATH=$JAVA_HOME/bin:$PATH

export JAVA_HOME PATH

[root@logstash jdk]# source /etc/profile

[root@logstash jdk]# java -version

openjdk version "21.0.4" 2024-07-16 LTS

Test run

Run the most basic Logstash pipeline to test the installation.

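A Logstash pipeline has two required elements, input and output, and one optional element, filter. Input plugins consume data from a source, filter plugins modify the data as you specify, and output plugins write the data to a destination.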
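The three elements map directly onto the sections of a pipeline configuration file. The sketch below only illustrates that layout; the `mutate`/`add_field` filter and the field name `pipeline_note` are hypothetical choices for the example, not something this tutorial requires.

```conf
# Pipeline layout: input and output are required, filter is optional.
input {
  stdin { }                      # read events from standard input
}

filter {
  mutate {
    # hypothetical example: add a constant field to every event
    add_field => { "pipeline_note" => "passed through the filter stage" }
  }
}

output {
  stdout { codec => rubydebug }  # print each event in a readable form
}
```

Deleting the whole `filter { ... }` section still leaves a valid pipeline that passes events straight from input to output.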

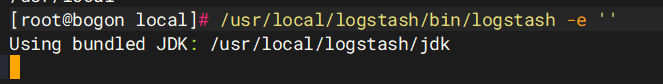

[root@bogon local]# /usr/local/logstash/bin/logstash -e ''

Using bundled JDK: /usr/local/logstash/jdk

Wait for Logstash to start:


Sending Logstash logs to /usr/local/logstash/logs which is now configured via log4j2.properties

[2025-11-07T20:48:37,224][INFO ][logstash.runner ] Log4j configuration path used is: /usr/local/logstash/config/log4j2.properties

[2025-11-07T20:48:37,227][WARN ][logstash.runner ] The use of JAVA_HOME has been deprecated. Logstash 8.0 and later ignores JAVA_HOME and uses the bundled JDK. Running Logstash with the bundled JDK is recommended. The bundled JDK has been verified to work with each specific version of Logstash, and generally provides best performance and reliability. If you have compelling reasons for using your own JDK (organizational-specific compliance requirements, for example), you can configure LS_JAVA_HOME to use that version instead.

[2025-11-07T20:48:37,228][INFO ][logstash.runner ] Starting Logstash {"logstash.version"=>"8.15.0", "jruby.version"=>"jruby 9.4.8.0 (3.1.4) 2024-07-02 4d41e55a67 OpenJDK 64-Bit Server VM 21.0.4+7-LTS on 21.0.4+7-LTS +indy +jit [x86_64-linux]"}

[2025-11-07T20:48:37,230][INFO ][logstash.runner ] JVM bootstrap flags: [-Xms1g, -Xmx1g, -Djava.awt.headless=true, -Dfile.encoding=UTF-8, -Djruby.compile.invokedynamic=true, -XX:+HeapDumpOnOutOfMemoryError, -Djava.security.egd=file:/dev/urandom, -Dlog4j2.isThreadContextMapInheritable=true, -Dlogstash.jackson.stream-read-constraints.max-string-length=200000000, -Dlogstash.jackson.stream-read-constraints.max-number-length=10000, -Djruby.regexp.interruptible=true, -Djdk.io.File.enableADS=true, --add-exports=jdk.compiler/com.sun.tools.javac.api=ALL-UNNAMED, --add-exports=jdk.compiler/com.sun.tools.javac.file=ALL-UNNAMED, --add-exports=jdk.compiler/com.sun.tools.javac.parser=ALL-UNNAMED, --add-exports=jdk.compiler/com.sun.tools.javac.tree=ALL-UNNAMED, --add-exports=jdk.compiler/com.sun.tools.javac.util=ALL-UNNAMED, --add-opens=java.base/java.security=ALL-UNNAMED, --add-opens=java.base/java.io=ALL-UNNAMED, --add-opens=java.base/java.nio.channels=ALL-UNNAMED, --add-opens=java.base/sun.nio.ch=ALL-UNNAMED, --add-opens=java.management/sun.management=ALL-UNNAMED, -Dio.netty.allocator.maxOrder=11]

[2025-11-07T20:48:37,233][INFO ][logstash.runner ] Jackson default value override `logstash.jackson.stream-read-constraints.max-string-length` configured to `200000000`

[2025-11-07T20:48:37,233][INFO ][logstash.runner ] Jackson default value override `logstash.jackson.stream-read-constraints.max-number-length` configured to `10000`

[2025-11-07T20:48:37,240][INFO ][logstash.settings ] Creating directory {:setting=>"path.queue", :path=>"/usr/local/logstash/data/queue"}

[2025-11-07T20:48:37,242][INFO ][logstash.settings ] Creating directory {:setting=>"path.dead_letter_queue", :path=>"/usr/local/logstash/data/dead_letter_queue"}

[2025-11-07T20:48:37,596][WARN ][logstash.config.source.multilocal] Ignoring the 'pipelines.yml' file because modules or command line options are specified

[2025-11-07T20:48:37,640][INFO ][logstash.agent ] No persistent UUID file found. Generating new UUID {:uuid=>"985e0c89-79c6-4003-82c0-6bdb775327ca", :path=>"/usr/local/logstash/data/uuid"}

[2025-11-07T20:48:38,274][INFO ][logstash.agent ] Successfully started Logstash API endpoint {:port=>9600, :ssl_enabled=>false}

[2025-11-07T20:48:38,498][INFO ][org.reflections.Reflections] Reflections took 149 ms to scan 1 urls, producing 138 keys and 481 values

[2025-11-07T20:48:38,780][INFO ][logstash.javapipeline ] Pipeline `main` is configured with `pipeline.ecs_compatibility: v8` setting. All plugins in this pipeline will default to `ecs_compatibility => v8` unless explicitly configured otherwise.

[2025-11-07T20:48:38,821][INFO ][logstash.javapipeline ][main] Starting pipeline {:pipeline_id=>"main", "pipeline.workers"=>2, "pipeline.batch.size"=>125, "pipeline.batch.delay"=>50, "pipeline.max_inflight"=>250, "pipeline.sources"=>["config string"], :thread=>"#<Thread:0x10c96784 /usr/local/logstash/logstash-core/lib/logstash/java_pipeline.rb:134 run>"}

[2025-11-07T20:48:39,485][INFO ][logstash.javapipeline ][main] Pipeline Java execution initialization time {"seconds"=>0.66}

[2025-11-07T20:48:39,504][INFO ][logstash.javapipeline ][main] Pipeline started {"pipeline.id"=>"main"}

The stdin plugin is now waiting for input:

[2025-11-07T20:48:39,516][INFO ][logstash.agent ] Pipelines running {:count=>1, :running_pipelines=>[:main], :non_running_pipelines=>[]}

After startup completes, type any text and press Enter, for example:

hello world

{

"message" => "hello world",

"event" => {

"original" => "hello world"

},

"type" => "stdin",

"@version" => "1",

"@timestamp" => 2025-11-07T12:52:59.130135321Z,

"host" => {

"hostname" => "logstash"

}

}

If you see output like this, Logstash is working correctly.
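When `-e` is given an empty string, Logstash falls back to a default input and a default output, which is why the event above carries `"type" => "stdin"` and is printed in this readable form. Per the Logstash command-line documentation, that default pipeline is equivalent to the explicit configuration below (shown here as a sketch for comparison with the file-based pipeline that follows):

```conf
input {
  stdin { type => "stdin" }      # default input used by -e ''
}

output {
  stdout { codec => rubydebug }  # default output used by -e ''
}
```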

Configuring input and output

In production, Logstash pipelines are more complex: they typically have one or more input, filter, and output plugins.

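In this section you'll create a Logstash pipeline that reads Apache web logs from standard input, parses them to create specific named fields, and writes the parsed data to standard output (the screen). This time, instead of defining the pipeline on the command line, you define it in a configuration file.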

Create a file (any name will do) with the following content to serve as the Logstash pipeline configuration file:

# vim /usr/local/logstash/config/first.conf

input {

stdin { }

}

output {

stdout {}

}

Test the configuration syntax (loading the configuration file)

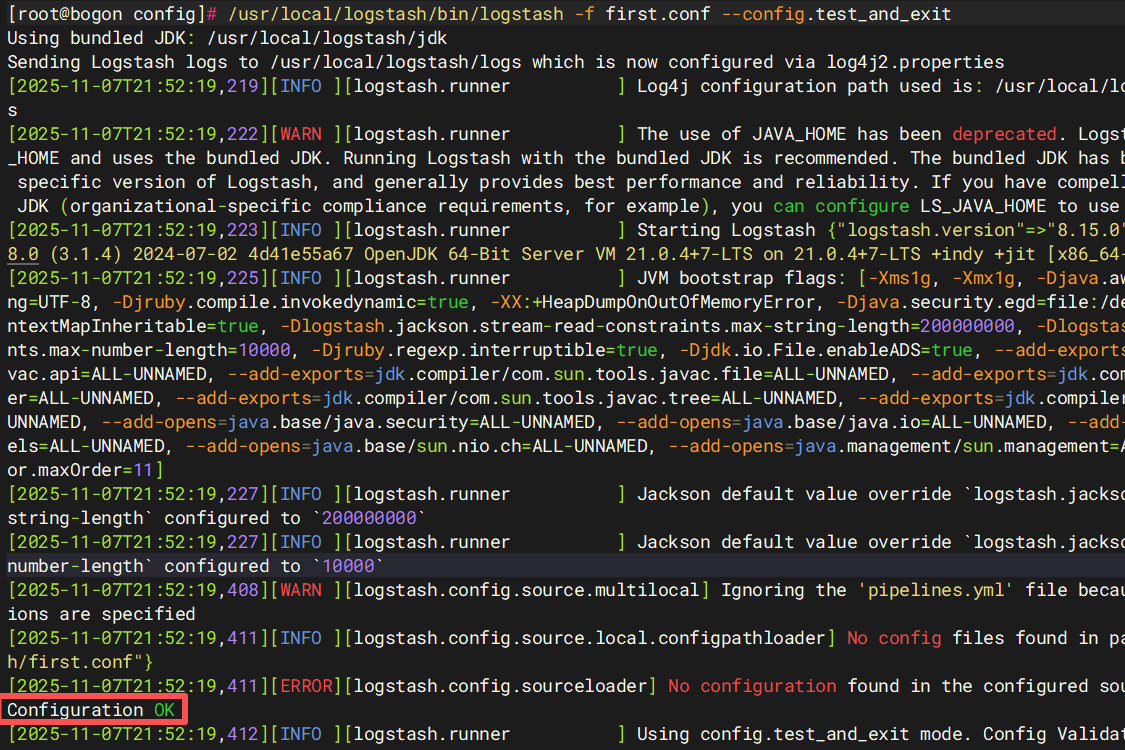

# bin/logstash -f config/first.conf --config.test_and_exit

`-f` specifies the pipeline configuration file. Here is a run from the config directory using a relative path:

[root@bogon config]# /usr/local/logstash/bin/logstash -f first.conf --config.test_and_exit

Using bundled JDK: /usr/local/logstash/jdk

Sending Logstash logs to /usr/local/logstash/logs which is now configured via log4j2.properties

[2025-11-07T21:52:19,219][INFO ][logstash.runner ] Log4j configuration path used is: /usr/local/logstash/config/log4j2.properties

[2025-11-07T21:52:19,222][WARN ][logstash.runner ] The use of JAVA_HOME has been deprecated. Logstash 8.0 and later ignores JAVA_HOME and uses the bundled JDK. Running Logstash with the bundled JDK is recommended. The bundled JDK has been verified to work with each specific version of Logstash, and generally provides best performance and reliability. If you have compelling reasons for using your own JDK (organizational-specific compliance requirements, for example), you can configure LS_JAVA_HOME to use that version instead.

[2025-11-07T21:52:19,223][INFO ][logstash.runner ] Starting Logstash {"logstash.version"=>"8.15.0", "jruby.version"=>"jruby 9.4.8.0 (3.1.4) 2024-07-02 4d41e55a67 OpenJDK 64-Bit Server VM 21.0.4+7-LTS on 21.0.4+7-LTS +indy +jit [x86_64-linux]"}

[2025-11-07T21:52:19,225][INFO ][logstash.runner ] JVM bootstrap flags: [-Xms1g, -Xmx1g, -Djava.awt.headless=true, -Dfile.encoding=UTF-8, -Djruby.compile.invokedynamic=true, -XX:+HeapDumpOnOutOfMemoryError, -Djava.security.egd=file:/dev/urandom, -Dlog4j2.isThreadContextMapInheritable=true, -Dlogstash.jackson.stream-read-constraints.max-string-length=200000000, -Dlogstash.jackson.stream-read-constraints.max-number-length=10000, -Djruby.regexp.interruptible=true, -Djdk.io.File.enableADS=true, --add-exports=jdk.compiler/com.sun.tools.javac.api=ALL-UNNAMED, --add-exports=jdk.compiler/com.sun.tools.javac.file=ALL-UNNAMED, --add-exports=jdk.compiler/com.sun.tools.javac.parser=ALL-UNNAMED, --add-exports=jdk.compiler/com.sun.tools.javac.tree=ALL-UNNAMED, --add-exports=jdk.compiler/com.sun.tools.javac.util=ALL-UNNAMED, --add-opens=java.base/java.security=ALL-UNNAMED, --add-opens=java.base/java.io=ALL-UNNAMED, --add-opens=java.base/java.nio.channels=ALL-UNNAMED, --add-opens=java.base/sun.nio.ch=ALL-UNNAMED, --add-opens=java.management/sun.management=ALL-UNNAMED, -Dio.netty.allocator.maxOrder=11]

[2025-11-07T21:52:19,227][INFO ][logstash.runner ] Jackson default value override `logstash.jackson.stream-read-constraints.max-string-length` configured to `200000000`

[2025-11-07T21:52:19,227][INFO ][logstash.runner ] Jackson default value override `logstash.jackson.stream-read-constraints.max-number-length` configured to `10000`

[2025-11-07T21:52:19,408][WARN ][logstash.config.source.multilocal] Ignoring the 'pipelines.yml' file because modules or command line options are specified

[2025-11-07T21:52:19,411][INFO ][logstash.config.source.local.configpathloader] No config files found in path {:path=>"/usr/local/logstash/first.conf"}

[2025-11-07T21:52:19,411][ERROR][logstash.config.sourceloader] No configuration found in the configured sources.

Configuration OK

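[2025-11-07T21:52:19,412][INFO ][logstash.runner ] Using config.test_and_exit mode. Config Validation Result: OK. Exiting Logstash

`Configuration OK` normally means the file is ready to use, but be careful here: the lines just above show `No config files found in path {:path=>"/usr/local/logstash/first.conf"}` and `No configuration found in the configured sources`. The relative path `first.conf` was resolved against the Logstash home directory (/usr/local/logstash) rather than the current config directory, so no file was actually validated. Pass the absolute path instead, for example:

[root@bogon config]# /usr/local/logstash/bin/logstash -f /usr/local/logstash/config/first.conf --config.test_and_exit

and confirm that no such error appears before trusting the result.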

Start Logstash

Use absolute paths where possible.

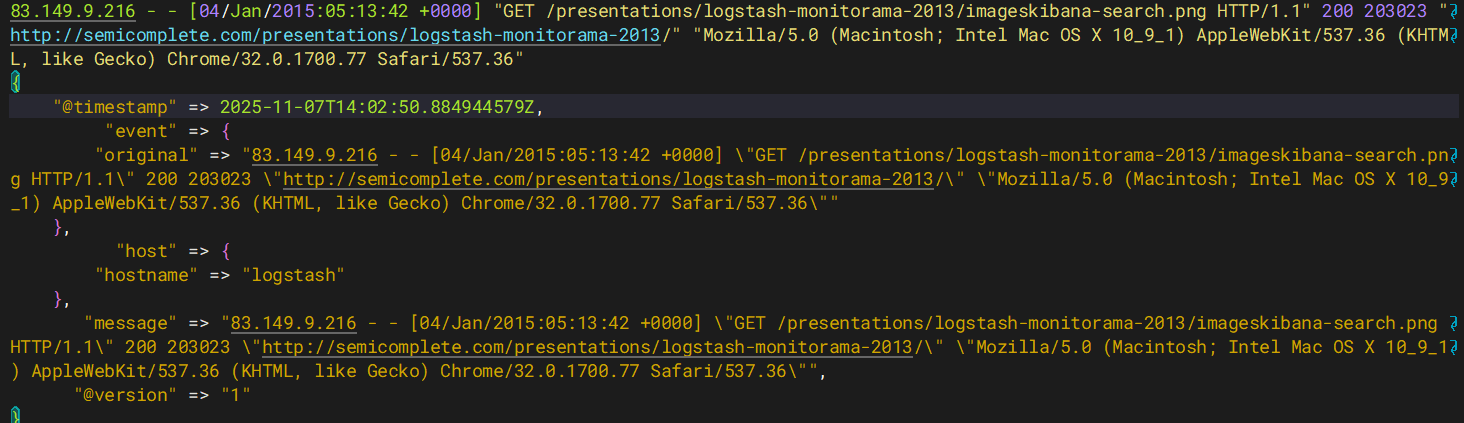

[root@bogon config]# /usr/local/logstash/bin/logstash -f /usr/local/logstash/config/first.conf

After startup, paste the following line into the terminal and press Enter:

83.149.9.216 - - [04/Jan/2015:05:13:42 +0000] "GET /presentations/logstash-monitorama-2013/imageskibana-search.png HTTP/1.1" 200 203023 "http://semicomplete.com/presentations/logstash-monitorama-2013/" "Mozilla/5.0 (Macintosh; Intel Mac OS X 10_9_1) AppleWebKit/537.36 (KHTML, like Gecko) Chrome/32.0.1700.77 Safari/537.36"

You will see output like the following:

83.149.9.216 - - [04/Jan/2015:05:13:42 +0000] "GET /presentations/logstash-monitorama-2013/imageskibana-search.png HTTP/1.1" 200 203023 "http://semicomplete.com/presentations/logstash-monitorama-2013/" "Mozilla/5.0 (Macintosh; Intel Mac OS X 10_9_1) AppleWebKit/537.36 (KHTML, like Gecko) Chrome/32.0.1700.77 Safari/537.36"

{

"@timestamp" => 2025-11-07T14:02:50.884944579Z,

"event" => {

"original" => "83.149.9.216 - - [04/Jan/2015:05:13:42 +0000] \"GET /presentations/logstash-monitorama-2013/imageskibana-search.png HTTP/1.1\" 200 203023 \"http://semicomplete.com/presentations/logstash-monitorama-2013/\" \"Mozilla/5.0 (Macintosh; Intel Mac OS X 10_9_1) AppleWebKit/537.36 (KHTML, like Gecko) Chrome/32.0.1700.77 Safari/537.36\""

},

"host" => {

"hostname" => "logstash"

},

"message" => "83.149.9.216 - - [04/Jan/2015:05:13:42 +0000] \"GET /presentations/logstash-monitorama-2013/imageskibana-search.png HTTP/1.1\" 200 203023 \"http://semicomplete.com/presentations/logstash-monitorama-2013/\" \"Mozilla/5.0 (Macintosh; Intel Mac OS X 10_9_1) AppleWebKit/537.36 (KHTML, like Gecko) Chrome/32.0.1700.77 Safari/537.36\"",

"@version" => "1"

}

The Grok filter plugin

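You now have a working pipeline, but the log messages are not in an ideal format. You want to parse them so that you can create specific named fields from each log line. For this, use the grok filter plugin, which parses unstructured log data into something structured and queryable.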

grok assigns field names to the parts of the content you're interested in and binds each part to its field name.

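How does grok know which parts you're interested in? It recognizes them through its predefined patterns, and you control what it extracts by configuring different patterns.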
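Besides ready-made composite patterns like COMBINEDAPACHELOG, you can assemble a pattern yourself from smaller predefined ones using the `%{SYNTAX:SEMANTIC}` form, where SYNTAX is the name of a pattern and SEMANTIC is the field that receives the matched text. The sketch below assumes a hypothetical log line such as `55.3.244.1 GET /index.html 15824 0.043`; the field names are illustrative only.

```conf
filter {
  grok {
    # %{PATTERN:field} stores the text matched by PATTERN in "field"
    # hypothetical input: 55.3.244.1 GET /index.html 15824 0.043
    match => { "message" => "%{IP:client} %{WORD:method} %{URIPATHPARAM:request} %{NUMBER:bytes} %{NUMBER:duration}" }
  }
}
```

Captured values are stored as strings; appending a type, as in `%{NUMBER:bytes:int}`, converts the value to an integer.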

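The pattern used here is %{COMBINEDAPACHELOG}, which parses a line in the Apache combined log format into fields such as the client address, HTTP method, URL, status code, response size, referrer, and user agent.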

Create a sample log file for testing

# vim /var/log/httpd.log

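83.149.9.216 - - [04/Jan/2015:05:13:42 +0000] "GET /presentations/logstash-monitorama-2013/imageskibana-search.png HTTP/1.1" 200 203023 "http://semicomplete.com/presentations/logstash-monitorama-2013/" "Mozilla/5.0 (Macintosh; Intel Mac OS X 10_9_1) AppleWebKit/537.36 (KHTML, like Gecko) Chrome/32.0.1700.77 Safari/537.36"

Make sure no cached read position is left over from earlier runs. The file input records how far it has read each file in a `.sincedb_*` file, and `start_position => "beginning"` only applies to files that have no recorded position, so delete the old record before testing: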

[root@logstash file]# pwd

/usr/local/logstash/data/plugins/inputs/file

[root@logstash file]# ls -a

. .. .sincedb_aff270f7990dabcdbd0044eac08398ef

[root@logstash file]# rm -rf .sincedb_aff270f7990dabcdbd0044eac08398ef

The modified pipeline configuration file is as follows:

[root@logstash config]# pwd

/usr/local/logstash/config

[root@logstash config]# ls

first.conf jvm.options log4j2.properties logstash-sample.conf logstash.yml pipelines.yml startup.options

[root@logstash config]# vim secend.conf    # inside the config file, # starts a comment

input {

file {    # use the file input plugin to read the sample log file

path => ["/var/log/httpd.log"]

start_position => "beginning"

}

}

filter {

grok {    # parse the web log and output structured data

match => { "message" => "%{COMBINEDAPACHELOG}" }

# match the value of the message field against the COMBINEDAPACHELOG pattern

# and map each captured part to a named field

}

}

output {

stdout {}

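}

If you don't need any filtering, just delete the filter section in the middle.

The pipeline then simply sends the data from its source straight to the destination.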
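Deleting the `.sincedb_*` file before every test run works, but for repeated experiments a common shortcut is to point the file input's `sincedb_path` option at `/dev/null`. The read position is then never persisted, so together with `start_position => "beginning"` the whole file is reread on every start. Treat this as a testing convenience only; in production you normally want the position kept so that a restart does not re-ingest old lines.

```conf
input {
  file {
    path => ["/var/log/httpd.log"]
    start_position => "beginning"   # only applies to files with no recorded position
    sincedb_path => "/dev/null"     # testing only: never remember read positions
  }
}
```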

Start Logstash

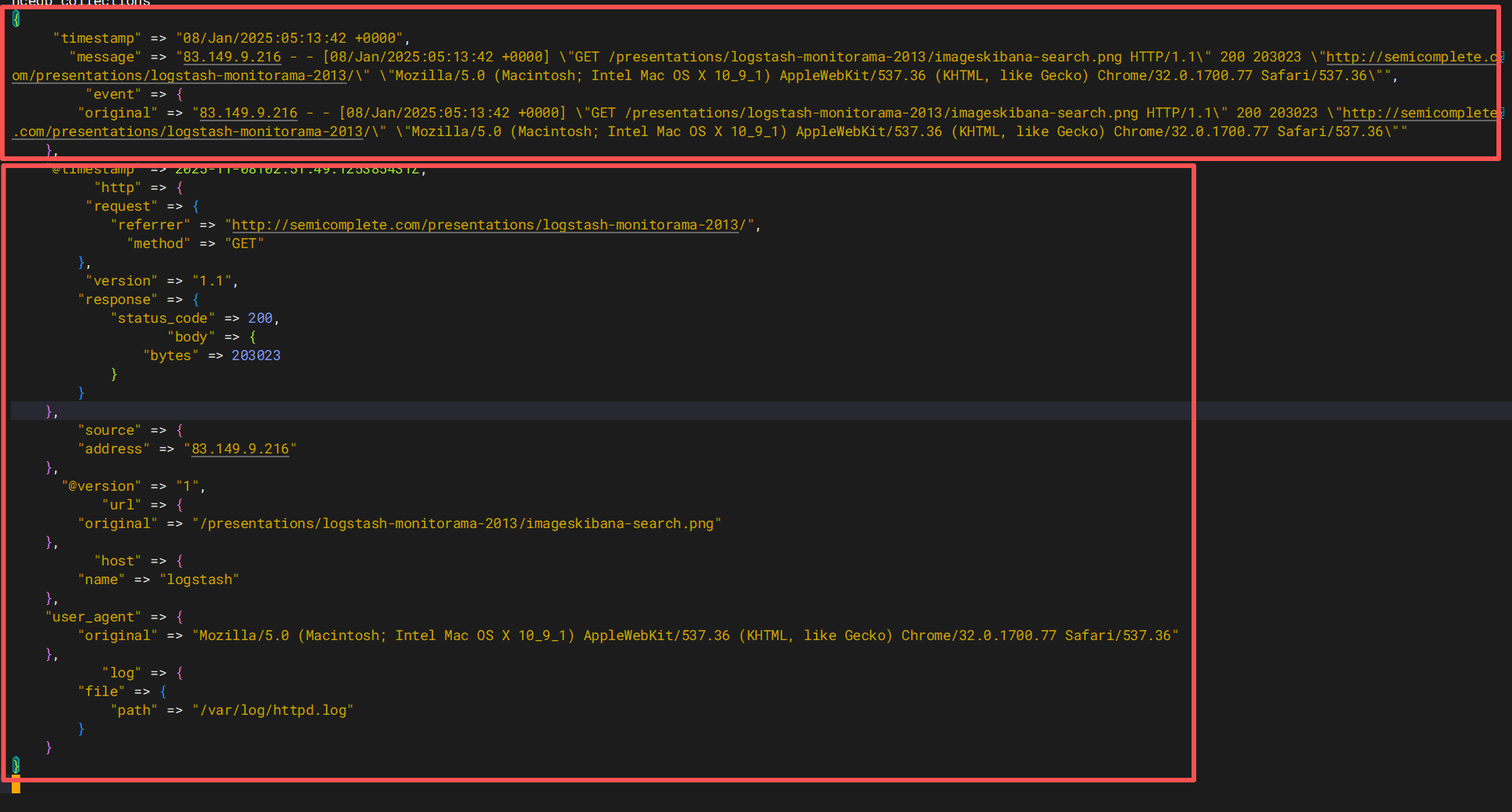

[root@bogon config]# /usr/local/logstash/bin/logstash -f /usr/local/logstash/config/secend.conf

{

"timestamp" => "08/Jan/2025:05:13:42 +0000",

"message" => "83.149.9.216 - - [08/Jan/2025:05:13:42 +0000] \"GET /presentations/logstash-monitorama-2013/imageskibana-search.png HTTP/1.1\" 200 203023 \"http://semicomplete.com/presentations/logstash-monitorama-2013/\" \"Mozilla/5.0 (Macintosh; Intel Mac OS X 10_9_1) AppleWebKit/537.36 (KHTML, like Gecko) Chrome/32.0.1700.77 Safari/537.36\"",

"event" => {

"original" => "83.149.9.216 - - [08/Jan/2025:05:13:42 +0000] \"GET /presentations/logstash-monitorama-2013/imageskibana-search.png HTTP/1.1\" 200 203023 \"http://semicomplete.com/presentations/logstash-monitorama-2013/\" \"Mozilla/5.0 (Macintosh; Intel Mac OS X 10_9_1) AppleWebKit/537.36 (KHTML, like Gecko) Chrome/32.0.1700.77 Safari/537.36\""

},

"@timestamp" => 2025-11-08T02:51:49.125385431Z,

"http" => {

"request" => {

"referrer" => "http://semicomplete.com/presentations/logstash-monitorama-2013/",

"method" => "GET"

},

"version" => "1.1",

"response" => {

"status_code" => 200,

"body" => {

"bytes" => 203023

}

}

},

"source" => {

"address" => "83.149.9.216"

},

"@version" => "1",

"url" => {

"original" => "/presentations/logstash-monitorama-2013/imageskibana-search.png"

},

"host" => {

"name" => "logstash"

},

"user_agent" => {

"original" => "Mozilla/5.0 (Macintosh; Intel Mac OS X 10_9_1) AppleWebKit/537.36 (KHTML, like Gecko) Chrome/32.0.1700.77 Safari/537.36"

},

"log" => {

"file" => {

"path" => "/var/log/httpd.log"

}

}

}

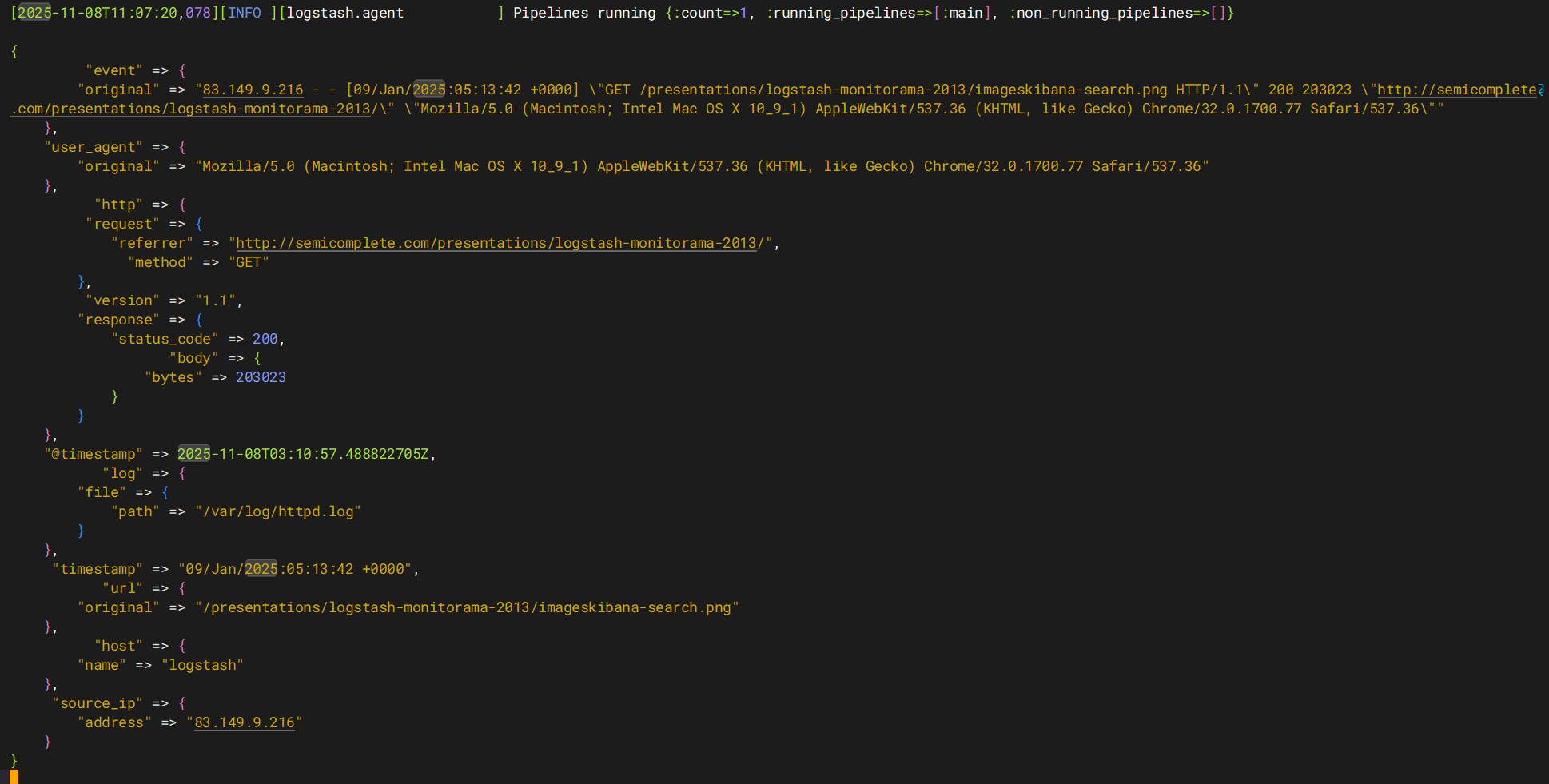

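In the screenshot, the upper part shows the original format and the lower part the new one. The content is the same; only the format differs.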

You'll notice that the original unstructured data has become structured data.
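When a line does not match the pattern, grok leaves the event unparsed and, by default, adds the tag `_grokparsefailure` to it. A minimal sketch of dropping such events with a conditional, assuming you don't want unparsed lines in the output (you could instead route them to a separate output for inspection):

```conf
filter {
  grok {
    match => { "message" => "%{COMBINEDAPACHELOG}" }
    # tags added when the match fails (this is the default value)
    tag_on_failure => ["_grokparsefailure"]
  }

  # discard events that grok could not parse; remove this block to keep them
  if "_grokparsefailure" in [tags] {
    drop { }
  }
}
```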

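As you may have noticed, the original message field is still there. If you don't need it, you can remove it with the remove_field option, shown here in mutate (remove_field is in fact a common option available on every filter plugin). remove_field can remove any field, and its value is an array of field names.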

The rename option of mutate renames fields.

The modified pipeline configuration file is as follows:

input {

file {

path => ["/var/log/httpd.log"]

start_position => "beginning"

}

}

filter {

grok {

match => { "message" => "%{COMBINEDAPACHELOG}" }

}

mutate {

rename => {    # rename the field

"source" => "source_ip"

}

}

mutate {

remove_field => ["message","@version"]    # remove fields we don't need

}

}

output {

stdout {}

}

After updating secend.conf, start Logstash:

[root@logstash config]# /usr/local/logstash/bin/logstash -f /usr/local/logstash/config/secend.conf

The original message field is now removed from the output.
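The two `mutate` blocks can also be merged into one: inside a single `mutate`, operations such as `rename` run first, and common options such as `remove_field` are applied afterwards. The sketch below also uses field-reference syntax (`[source][address]`) to move only the IP string into a hypothetical top-level field `client_ip`, instead of renaming the whole `source` object as the configuration above does.

```conf
filter {
  grok {
    match => { "message" => "%{COMBINEDAPACHELOG}" }
  }

  mutate {
    # move just the IP string out of the nested source object
    rename => { "[source][address]" => "client_ip" }
    # common option, applied after the mutate operations above
    remove_field => ["message", "@version"]
  }
}
```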

Using the GeoIP plugin to geolocate IP addresses

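GeoIP is a commonly used plugin that converts IP addresses into geographic information. Using data from the MaxMind GeoLite2 databases, it adds location details for an IP address, such as the country, city, and latitude/longitude. It is especially useful when you need to analyze where the IP addresses in your log data come from.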

Register an account on the official MaxMind site and download the database:

Download GeoIP Databases | MaxMind: https://www.maxmind.com/en/accounts/1059410/geoip/downloads
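Once you have a GeoLite2 City database file, the geoip filter can be added after grok. A minimal sketch, assuming the file was unpacked to the hypothetical path `/usr/local/logstash/GeoLite2-City.mmdb` and that the `source` field has not been renamed: in ECS v8 mode grok stores the client IP in `[source][address]` (as in the output above), and `target` names where the lookup result is written. Recent Logstash versions can also download and manage GeoLite2 databases automatically, in which case the `database` option may be omitted; check the geoip plugin documentation for your version.

```conf
filter {
  grok {
    match => { "message" => "%{COMBINEDAPACHELOG}" }
  }

  geoip {
    source => "[source][address]"   # IP field produced by grok in ECS v8 mode
    target => "source"              # write the location data under the source object
    database => "/usr/local/logstash/GeoLite2-City.mmdb"   # hypothetical path
  }
}
```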
